How to Win Budget and Security Approval for AI

Expert advice from Raegan Wilson (VP of Ecosystem Solutions & Innovation, Spur Reply), Tyler Calder (CMO, PartnerStack), Cody Sunkel (CRO & Co-Founder, Partner Fleet), and Justin Zimmerman (Founder, Partnerplaybooks)

Table of Contents

- Snapshot

- Why most partner tech decisions go sideways

- The partner tech gap you cannot see on a demo

- The readiness model: people, program, process, and partners

- Before you ask for more budget, optimize what you already have

- How to build a case for change that gets approved

- The identify, define, decide framework for buying partner tech

- What alignment really means inside your company

- Three AI workflows partner teams are actually using

- What AI visibility means for partner marketing

- Why tech partnerships need a different type of platform

- The KPIs tech partner teams should care about more

- Recommended tools

- FAQs

- Conclusion

Snapshot

You are not just choosing software anymore. You are choosing how your partner team will operate, how quickly you can move, how cleanly data will flow across teams, and whether AI will become a force multiplier or just another expensive distraction. That is the big shift happening right now.

Across partnerships, ecosystems, and go-to-market teams, there is no shortage of ideas. Every week brings another autonomous workflow, another AI assistant, another promise that a new tool will save hours and unlock growth. But most teams do not fail because the software is bad. They fail because the program is not ready, the process is unclear, the data is unreliable, or internal teams were never aligned in the first place.

The opportunity is real. So is the risk. If you get the foundation right, you can automate the right things, improve partner experience, and earn executive support with confidence.

If you want to solve internal misalignment, weak tool adoption, and fuzzy ROI, keep reading to see how Raegan Wilson, Tyler Calder, and Cody Sunkel approach each of those problems.

“Most organizations don’t really start with the technology problem. They start with the readiness problem.” -Raegan Wilson

Why most partner tech decisions go sideways

If you work in partnerships, you are probably feeling the pressure from two directions at once.

On one side, there is a flood of new AI-powered workflows, partner automation tools, PRMs, marketplaces, and ecosystem platforms. On the other side, there is a very real purchasing environment where governance, IT, procurement, security, and leadership all want a say in what gets connected to your CRM and your data.

That creates a problem many partner teams know well. You may know exactly what you want to improve, but getting from idea to approved implementation is a separate skill set. And if you miss that part, you can spend months evaluating tools that never make it through internal review.

Raegan’s framing is useful because it cuts through the noise. The question is not just, “Is this AI workflow cool?” It is, “Is your team ready for it, can the business support it, and will it actually solve the problem you think it solves?”

If you are making PRM or ecosystem decisions this year, it helps to compare categories with a more grounded framework. Partner Playbooks has also published a practical resource on choosing your next PRM that fits nicely alongside the buying approach outlined here.

“Without addressing the things that are below the surface, buying new partner tech just moves the mess into a different interface.” -Raegan Wilson

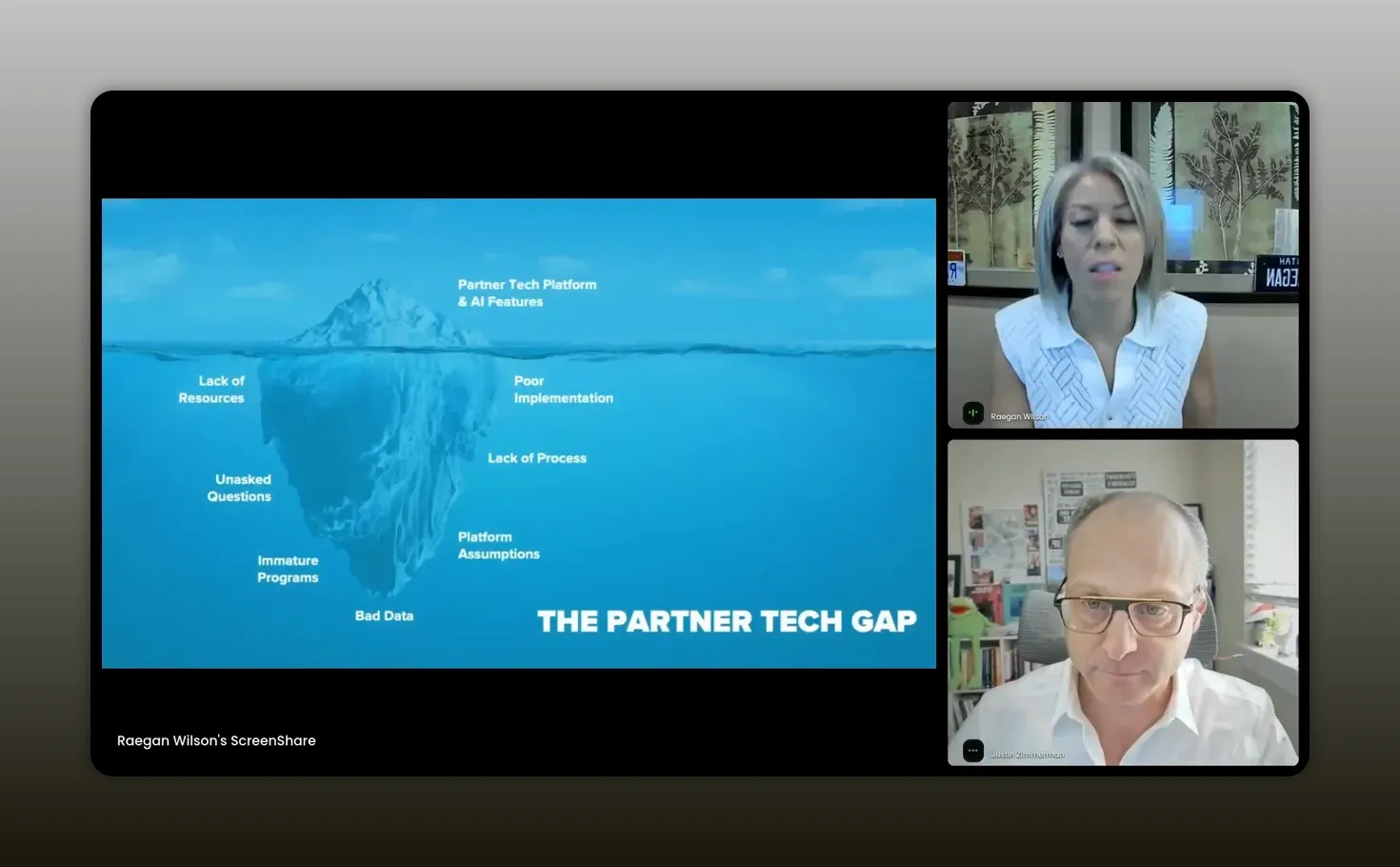

The partner tech gap you cannot see on a demo

One of the strongest ideas Raegan shared is the “partner tech gap.” It is the difference between the shiny things you can see and the operational realities you usually cannot.

Above the surface, everything looks promising:

- smart AI features

- slick partner portals

- automated lead flows

- recruitment workflows

- marketplace capabilities

Below the surface is where implementations either succeed or fail:

- lack of resourcing

- unclear ownership

- weak change management

- missing processes

- bad or incomplete partner data

- immature partner programs

- misaligned expectations between buyer and vendor

That last point matters more than most teams realize. Raegan called out how often buyers and vendors use the same words to mean different things. A platform vendor may say “automation,” “recruitment,” “attribution,” or “partner onboarding,” but unless you get very specific, you may discover later that your exact workflow is not actually supported.

That is why her advice is so simple and so good: if you do not see it in the demo, it does not exist.

That does not mean vendors are being dishonest. It means assumptions are expensive. The fix is disciplined discovery.

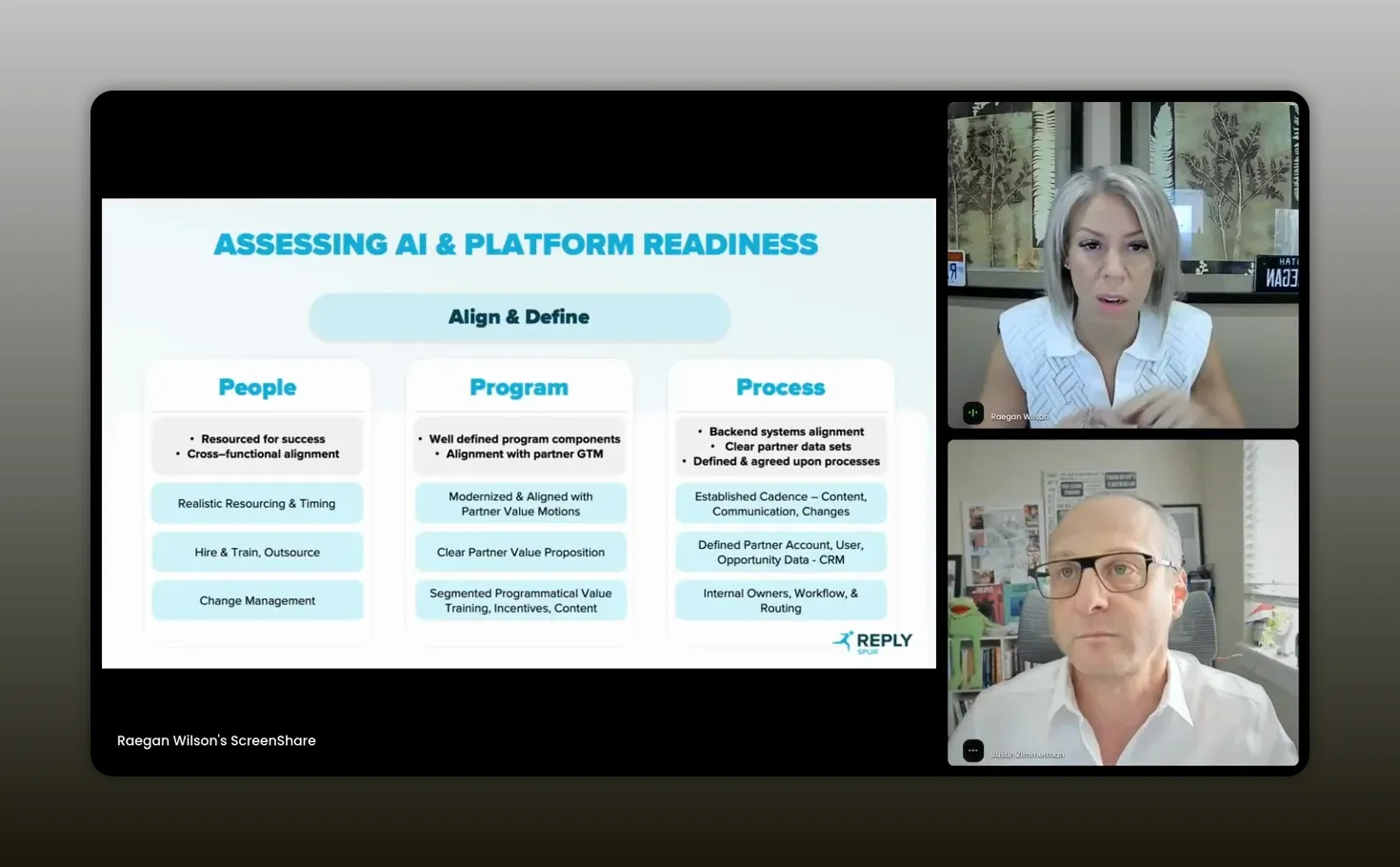

The readiness model: people, program, process, and partners

Before platform comes readiness.

Raegan breaks readiness into a practical set of building blocks: people, program, and process, plus a fourth "P": partners.

1. People

You still need humans. That sounds obvious, but teams miss it all the time.

Someone has to own implementation. Someone has to own the platform after launch. Someone has to handle change management, internal communications, external rollout, and long-term optimization. Most teams do not magically have 40 extra hours a week to absorb all of that.

So ask yourself:

- Who is running the implementation?

- Who owns the platform long term?

- Who manages change internally?

- Who supports partner-facing adoption?

- Do you need outside help?

2. Program

Your tooling should map to the way your partners actually create value.

A build partner, a referral partner, a reseller, and a services partner do not all need the same workflows. If your partner program is vague, your software requirements will be vague too. That usually leads to buying broad capabilities instead of solving the specific problem.

A well-defined program makes it easier to answer questions like:

- What partner motions matter most right now?

- What do those partners need from us?

- Where is the friction in their experience today?

- What business outcome are we trying to improve?

3. Process

You cannot automate what does not exist.

If your lead handoff, deal registration, content approval, onboarding, or reporting process lives partly in Slack, partly in someone’s head, and partly in a spreadsheet, the software is not the first problem. The process is.

Readiness here means:

- clean data

- defined workflows

- governance

- reporting standards

- a cadence for maintenance and optimization

4. Partners

You also need clarity on who your partners are and how they engage. It is hard to improve partner experience if you do not have a clean picture of your ecosystem in the first place.

Raegan’s suggestion is straightforward: score yourself on readiness. Rate your people, program, and process maturity from one to five. The low scores tell you where the real work is before you go shopping.
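If you want to make that self-assessment repeatable across stakeholders, a few lines of code are enough. The sketch below is purely illustrative. The dimension names follow the article. The threshold of 3 and the function name `readiness_gaps` are hypothetical choices, not part of Raegan's framework. The function flags every dimension that scores below the threshold and lists the weakest one first.

```python
# Hypothetical readiness self-assessment: each dimension is scored from
# 1 (ad hoc) to 5 (mature). The threshold of 3 is an assumed cut-off.
READINESS_THRESHOLD = 3


def readiness_gaps(scores: dict[str, int], threshold: int = READINESS_THRESHOLD) -> list[str]:
    """Return the dimensions scoring below the threshold, lowest score first."""
    for name, score in scores.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{name} score must be between 1 and 5, got {score}")
    gaps = [name for name, score in scores.items() if score < threshold]
    # sorted() is stable, so ties keep the order in which they were entered.
    return sorted(gaps, key=lambda name: scores[name])


if __name__ == "__main__":
    example = {"people": 2, "program": 4, "process": 1}
    print(readiness_gaps(example))
```

The lowest-scoring items are the ones to fix before you start any vendor conversations.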

“We can’t automate things that don’t exist.” -Raegan Wilson

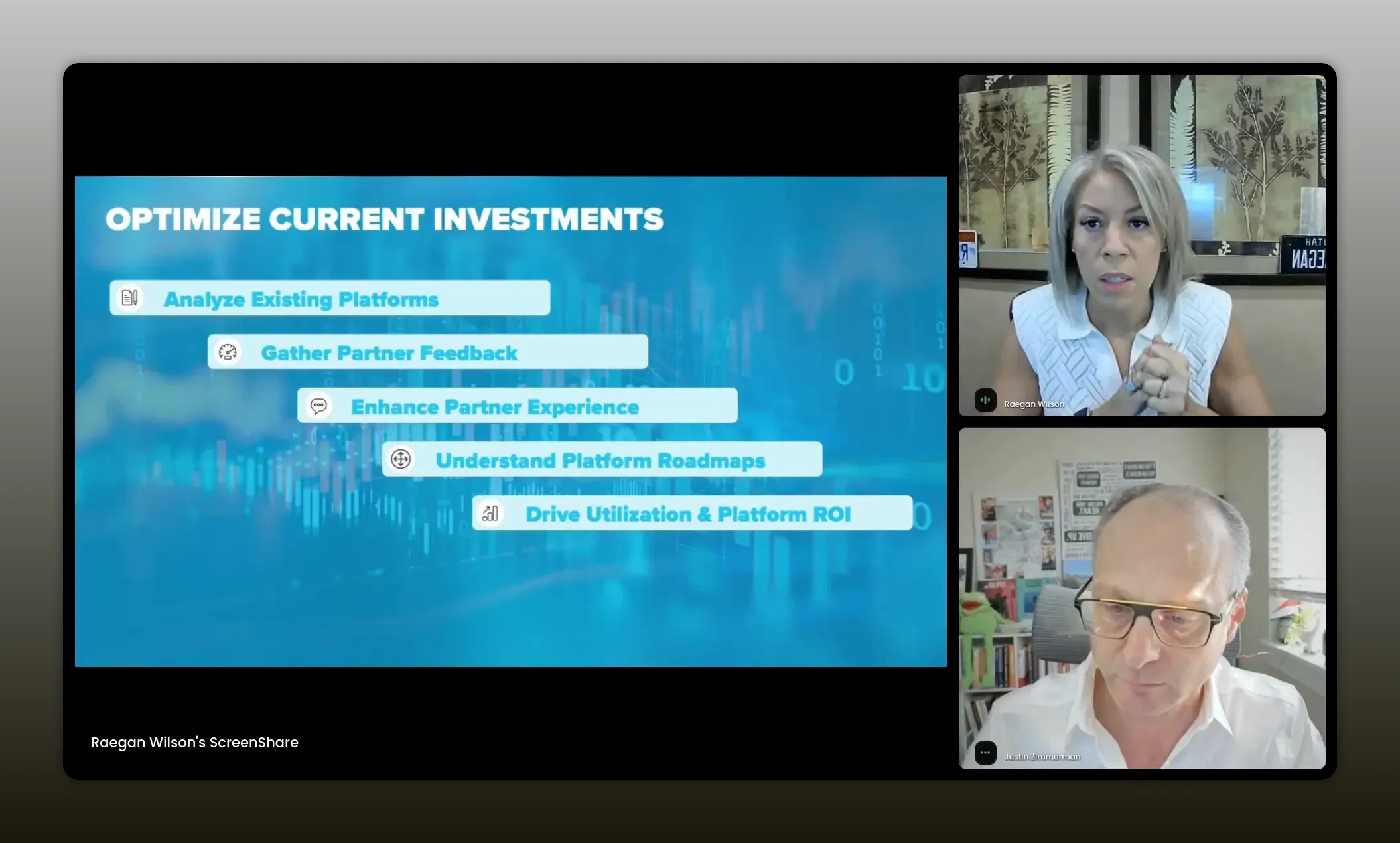

Before you ask for more budget, optimize what you already have

This is one of the more overlooked points in the whole session.

If you want leadership to fund a new platform, AI add-on, or partner workflow tool, you need to show that you have already taken your current stack seriously.

That means four things.

- Optimize existing tools. If you have a portal, PRM, marketplace, or data-sharing platform already in place, improve adoption and usage first.

- Gather partner feedback. You want to bring a real problem to leadership, not a guess.

- Understand the roadmap of your current vendors. Some features may already be coming, especially around AI, and may be easier to adopt within your current licensing model.

- Show utilization and ROI. Leaders are far more likely to fund the next step if you can demonstrate value from the last one.

That is especially true if you are already building a more disciplined data strategy. If you want another perspective on how partnerships, data, and AI fit together, this related piece on partnerships, data, and AI for revenue teams expands on that crawl-walk-run mindset.

“Before you ask for more budget, optimize what you already have—improve adoption, gather partner feedback, review your vendors’ roadmaps, and prove utilization and ROI from the last step.” -Raegan Wilson

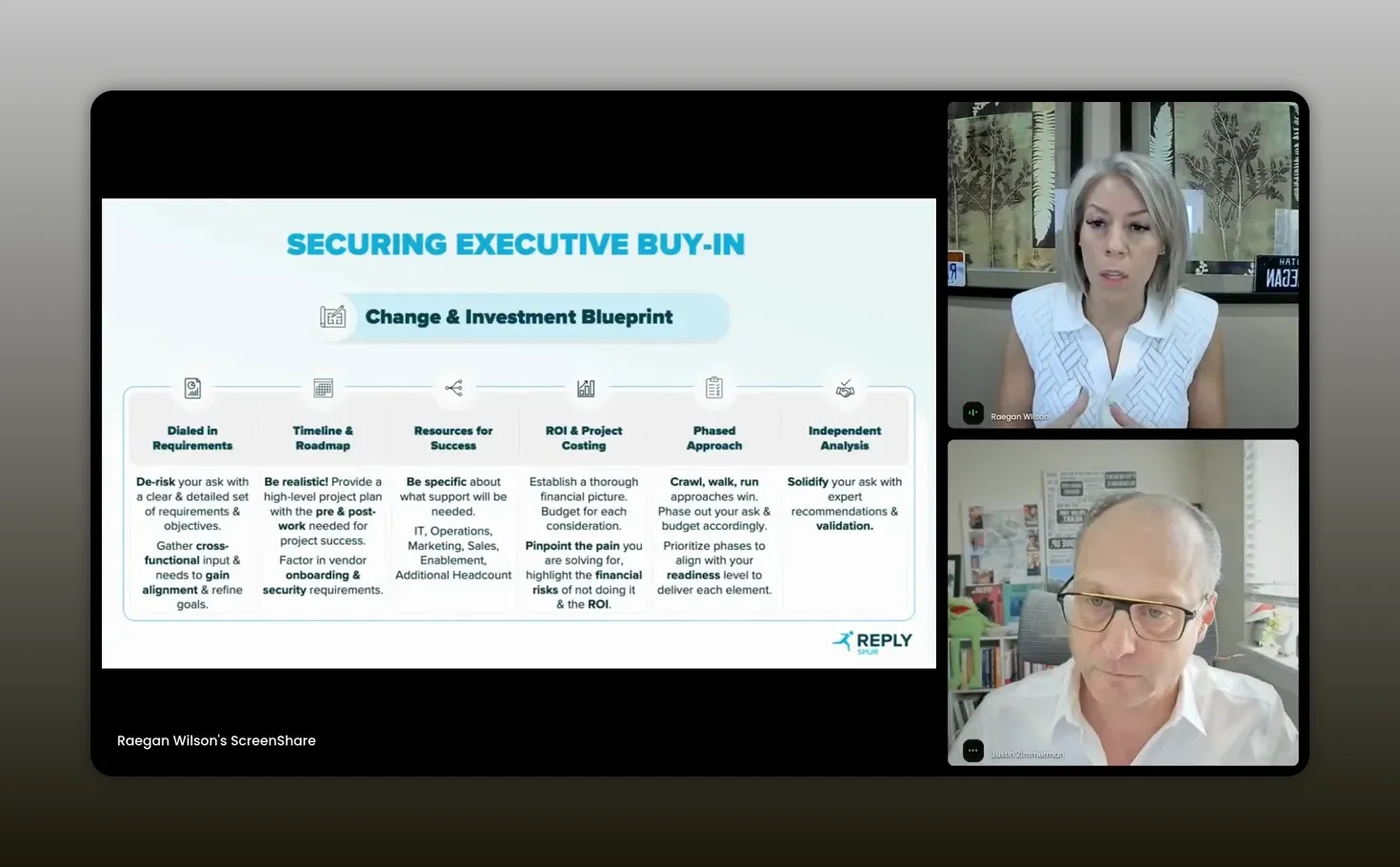

How to build a case for change that gets approved

Once you have the readiness work done, the next challenge is getting executive buy-in.

Raegan’s “case for change” framework is valuable because it is not built around hype. It is built around reducing risk.

Assess readiness first

Do not walk into a budget conversation with known internal gaps you have not addressed. If your team is not aligned or you do not know who owns implementation, leadership will sense the risk immediately.

Build tight requirements

This is where you define exactly what you are solving for. Not everything. Not the whole ecosystem. Not the future state of all partnerships forever. Just the clearest high-value problem in front of you.

A tight requirement set also makes vendor evaluation dramatically easier.

Use a realistic timeline

Teams constantly underestimate implementation time. Security review, procurement, vendor onboarding, IT integration work, training, change management, and staged rollout all take time.

That gets even more complex with AI. Some organizations now have AI review boards, stricter security checks, or additional legal review for how data is used. Raegan’s advice here is practical: in some cases, it is smarter to phase the AI component later if that helps the core vendor onboarding move faster.

Spell out the resource ask

If you need help from IT, operations, design, enablement, or a third-party consultant, say that clearly. Hidden resourcing needs become budget objections later.

Build a defensible financial model

You need to show the pain you are solving and the cost of doing nothing. What gets slower, leakier, or more expensive if you do not make this investment?

Also tie the ask to a company-level strategic priority. That could be growth, operational efficiency, retention, AI readiness, partner leverage, or expansion into a new channel. The more your request sounds like a company initiative instead of a team preference, the stronger it becomes.
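The "cost of doing nothing" often comes down to simple arithmetic, and showing that arithmetic makes the number easier to defend. Here is a minimal sketch under stated assumptions. The two cost drivers (referrals that leak before they are recorded, and hours spent on manual cleanup) are example inputs chosen for illustration. Every field name is hypothetical, and you would substitute your own data and any other cost drivers that apply.

```python
from dataclasses import dataclass


@dataclass
class InactionInputs:
    # All fields are hypothetical example inputs; replace them with your own data.
    referrals_per_year: int        # referrals partners send in a year
    leak_rate: float               # share of referrals never recorded (0.0 to 1.0)
    win_rate: float                # share of recorded referrals that close (0.0 to 1.0)
    avg_deal_size: float           # revenue per closed deal
    manual_hours_per_year: float   # hours spent on manual entry and cleanup
    loaded_hourly_cost: float      # fully loaded cost of one of those hours


def annual_cost_of_inaction(i: InactionInputs) -> float:
    """Estimate yearly revenue lost to leakage plus the cost of manual work."""
    for rate in (i.leak_rate, i.win_rate):
        if not 0.0 <= rate <= 1.0:
            raise ValueError("rates must be between 0 and 1")
    lost_revenue = i.referrals_per_year * i.leak_rate * i.win_rate * i.avg_deal_size
    manual_cost = i.manual_hours_per_year * i.loaded_hourly_cost
    return lost_revenue + manual_cost


if __name__ == "__main__":
    inputs = InactionInputs(500, 0.10, 0.20, 20_000, 600, 75)
    print(f"Estimated annual cost of inaction: {annual_cost_of_inaction(inputs):,.0f}")
```

Keeping every input explicit lets finance challenge a specific assumption rather than the whole model.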

Take a crawl, walk, run approach

One of the smartest ways to de-risk a tool purchase is not to ask for everything upfront. Phase the rollout. Start with the core capability, then add adjacent workflows later.

That applies to budget too. Smaller, staged investments are often easier to approve than a giant all-at-once transformation project.

“When we’re going to ask for executive buy-in, it really becomes a lot easier when we de-risk the ask.” -Raegan Wilson

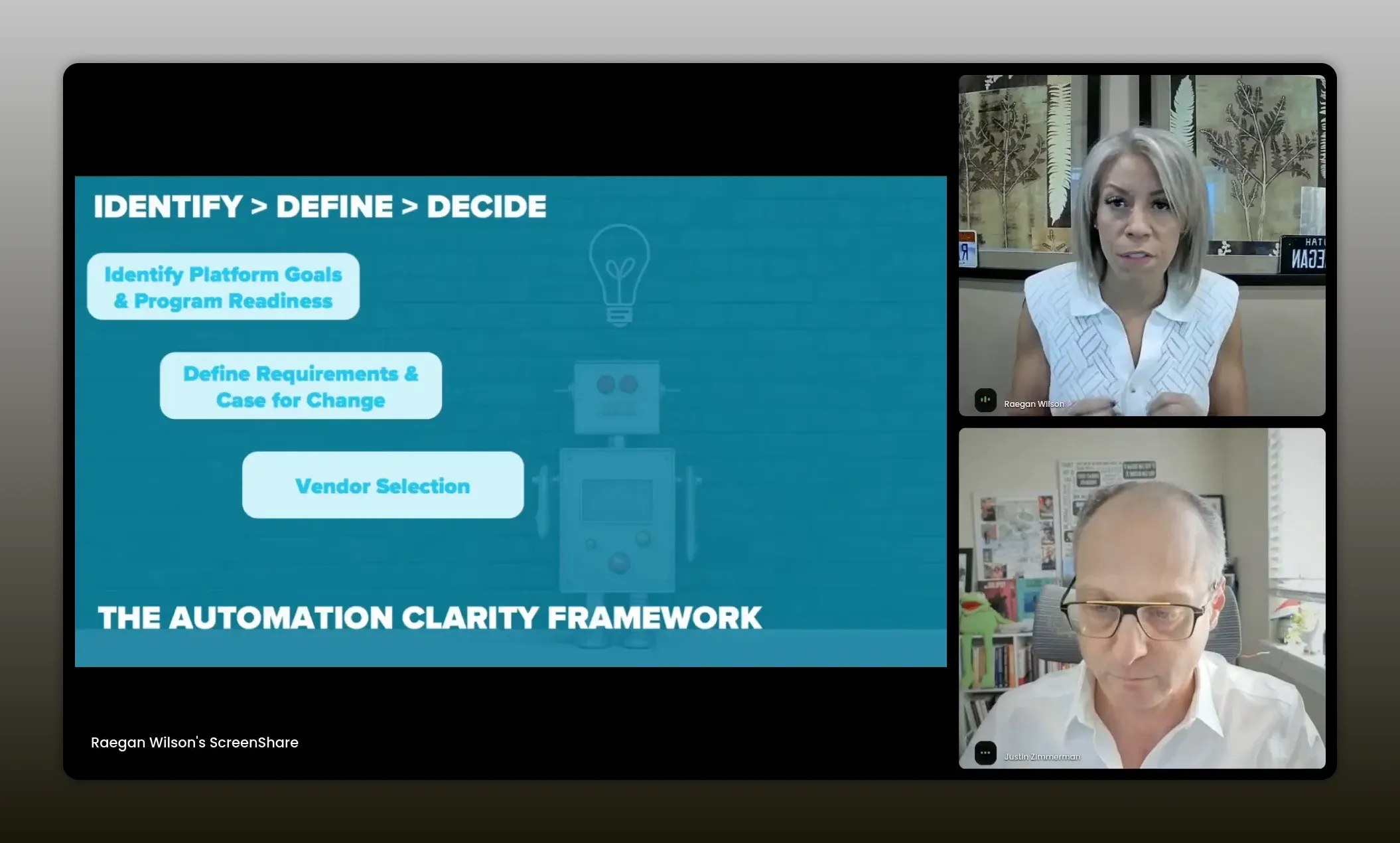

The identify, define, decide framework for buying partner tech

Raegan simplifies the full buying motion into three steps:

- Identify your platform goals and readiness level

- Define your requirements and case for change

- Decide on the vendor only after you have clarity

The order matters.

Many teams start at decide. They see a product, hear a good pitch, and start imagining how they could use it. But if you reverse the order and do the hard internal work first, the vendor decision gets cleaner and faster.

This is also how you remove emotion from the buying process. Instead of choosing based on who had the best demo or who used the most exciting AI language, you choose based on fit, proof, outcomes, and operational feasibility.

“Emotion is the enemy of a good vendor decision. In the identify, define, and decide framework, you take emotion out by anchoring on readiness, tightening the real requirement, and only deciding after you can prove the fit.” -Raegan Wilson

What alignment really means inside your company

When Justin asked Raegan what most often stops implementations from working, her answer was clear: lack of alignment across teams.

That is worth sitting with for a minute.

Partnership teams think about alignment all the time, but usually in the context of external partners. The deeper challenge is using those same partnership skills internally. You need alignment with IT, ops, sales, marketing, security, enablement, and leadership if the system you want is going to touch their work.

That means:

- bringing IT in early

- surfacing dependencies before the budget meeting

- being honest about who you need help from

- making the business case in language other teams care about

In other words, the path to better partner tech often starts by behaving like a great internal partner.

Three AI workflows partner teams are actually using

After Raegan laid out the buying framework, Tyler from PartnerStack showed what it looks like when AI is applied to real partner workflows instead of generic productivity claims.

He focused on three use cases that many partner teams struggle with right now:

- lead capture

- partner recruitment

- content marketplace activation

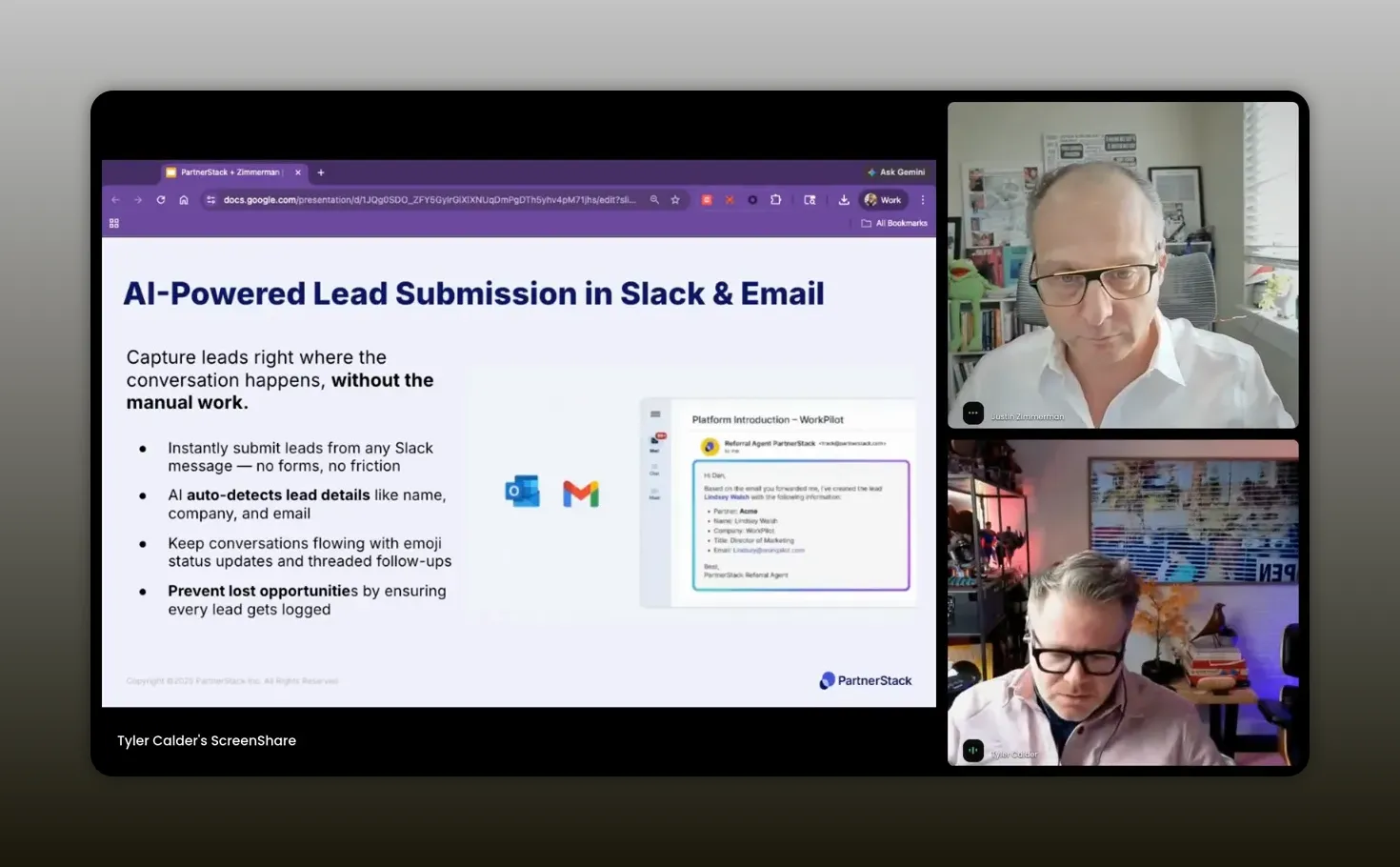

AI workflow #1: Frictionless lead capture

One of the biggest sources of leakage in partner programs is getting referred leads into the system accurately and quickly.

Partners do not always want to log into a portal just to submit a lead. They work in Slack. They work in email. They send quick messages. And if your process requires them to stop what they are doing and enter data in a separate place, some referrals will never get recorded properly.

Tyler described how PartnerStack handles this by recognizing when a lead is being referred through tools like Slack or email, pulling out the relevant information, organizing it, and pushing it into the platform.

The practical benefits are meaningful:

- less friction for partners

- more lead submissions

- better attribution accuracy

- less cleanup work for partner teams

He shared that this workflow has driven about a 12 percent increase in submitted leads, with improved partner attribution as an added upside.

“We want to work where our partners work. We want to make it simple. We want to remove any type of friction.” -Tyler Calder
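To make the "recognize, extract, organize" steps concrete, here is a deliberately simple sketch. It is not PartnerStack's implementation, which Tyler described as AI-driven. As a stand-in for the detection and extraction step, it uses keyword hints and a regular expression. The trigger phrases, the `LeadRecord` fields, and the `extract_lead` function are all hypothetical. The final push into a PRM is left out because any real platform API would be vendor-specific.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Simplified email pattern; real-world addresses can be messier.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# Hypothetical trigger phrases; a production system would likely use a classifier or LLM.
REFERRAL_HINTS = ("intro", "refer", "connect you with")


@dataclass
class LeadRecord:
    partner_id: str
    contact_email: str
    company_domain: str
    source_channel: str   # e.g. "slack" or "email"
    raw_text: str         # kept so a human can review the original message


def extract_lead(message: str, partner_id: str, channel: str) -> Optional[LeadRecord]:
    """Return a structured lead if the message looks like a referral with a contact email."""
    if not any(hint in message.lower() for hint in REFERRAL_HINTS):
        return None
    match = EMAIL_RE.search(message)
    if match is None:
        return None  # Looks like a referral but has no contact: route to a human instead.
    email = match.group(0)
    domain = email.split("@", 1)[1].lower()
    return LeadRecord(partner_id, email, domain, channel, message)


if __name__ == "__main__":
    msg = "Quick intro: Dana at Acme is evaluating tools, reach her at dana@acme.io."
    print(extract_lead(msg, partner_id="p-42", channel="slack"))
```

Whatever the detection method, the design goal is the same. The partner never leaves Slack or email, and attribution is tied to the partner at the moment the message arrives.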

AI workflow #2: Partner recruitment at scale

Partner recruitment is another area where teams burn time manually.

The challenge is not just finding more partners. It is finding the right partners without blasting generic outreach and hoping something sticks.

Tyler explained that PartnerStack uses AI to help define an ideal partner profile using existing partner performance and other signals. From there, the platform scans its network for likely fit partners and surfaces recommendations inside the product.

Then comes the action layer: outreach. Personalized messages can be generated in brand voice, or the platform’s recruit agent can run the outreach motion more autonomously.

That combination of fit identification and personalized outreach is what makes the workflow useful. It is not just prospecting. It is scaling the first pass of partner development work.

Tyler reported that this has led to roughly a 60 percent lift in recruiting ideal-fit partners.

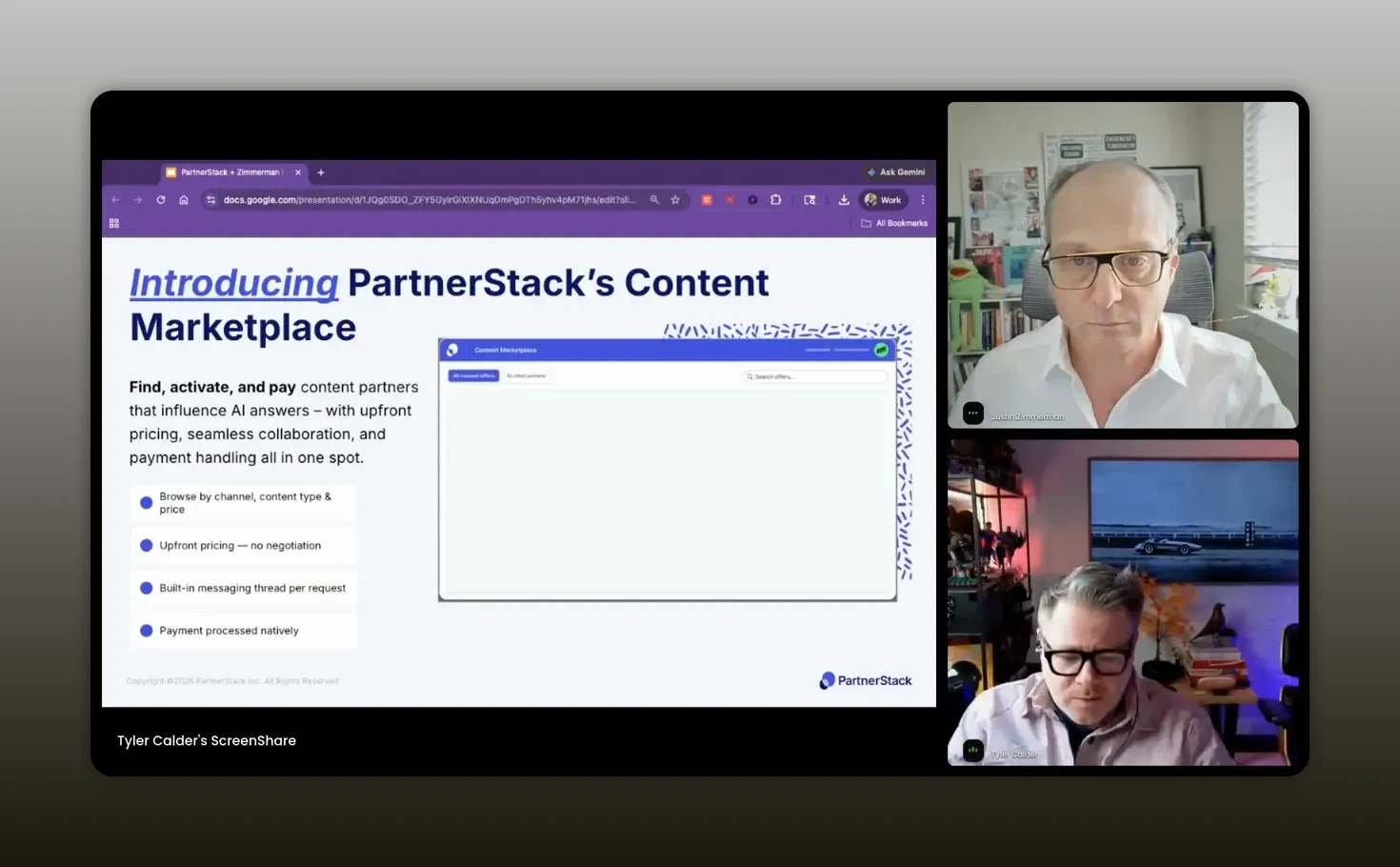

AI workflow #3: Content marketplaces and partner-created influence

This was probably the most strategically interesting section because it ties partnerships directly to AI visibility.

The basic idea is this: as more B2B buyers use large language models to start their research, your brand’s visibility inside those systems matters a lot. If you are not showing up in those results, or showing up inaccurately, you may not even make the shortlist.

Tyler pointed to a bigger shift in buying behavior. Many buyers now begin by asking AI tools for product recommendations, category comparisons, or shortlists. What those systems cite and trust is influenced heavily by third-party content.

That matters for partner teams because so much third-party content is partner-created content.

What AI visibility means for partner marketing

Here is the strategic leap Tyler made, and it is a good one: co-marketing is no longer only about sourced pipeline or campaign reach. It is also about whether your brand is represented across the content ecosystem that AI models use to understand your category.

He shared a few core ideas:

- A large majority of brand mentions in LLM results come from third-party domains, not your own site.

- That is similar to the old SEO distinction between on-page and off-page authority.

- Your owned content still matters, but third-party validation helps models trust your claims.

- Mid-tail and long-tail publishers can matter a lot, not just giant media sites.

That last point is especially important. Many teams assume they need only top-tier publishers like Forbes or PCMag to shape AI visibility. Tyler argued that niche and engaged partner publishers often play a major role too.

That is where a content marketplace approach comes in. If you can identify which domains are influencing your category in AI-generated results, and you can activate relevant partner content with those publishers, you can improve your share of voice in AI systems.

Tyler said this approach has driven around a 133 percent increase in share of voice for some programs.
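Tyler did not describe how share of voice is calculated. A common working definition is your brand's mentions divided by all tracked-brand mentions across a sample of AI answers for category prompts. The sketch below assumes that definition and uses hypothetical function names. It assumes you have already collected the answer texts and any URLs they cite. It also shows how to rank the cited domains, which tells you which publishers are shaping your category.

```python
from collections import Counter
from urllib.parse import urlparse


def share_of_voice(answers: list[str], brand: str, competitors: list[str]) -> float:
    """Brand's share of tracked-brand mentions, counting each brand at most once per answer.

    Uses plain substring matching, so a name that appears inside another word
    will also count; this is a simplification.
    """
    counts: Counter[str] = Counter()
    for answer in answers:
        lower = answer.lower()
        for name in [brand, *competitors]:
            if name.lower() in lower:
                counts[name] += 1
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0


def top_cited_domains(cited_urls: list[str], n: int = 5) -> list[tuple[str, int]]:
    """Rank the domains cited in AI answers, treating www.example.com as example.com."""
    domains = Counter(urlparse(u).netloc.lower().removeprefix("www.") for u in cited_urls)
    return domains.most_common(n)


if __name__ == "__main__":
    sample = ["Acme and Globex are popular", "Try Acme", "Globex or Initech"]
    print(share_of_voice(sample, "Acme", ["Globex", "Initech"]))
    print(top_cited_domains(["https://www.example.com/a", "https://blog.partner.io/x"]))
```

Running the same prompt set before and after a content push gives you a before/after comparison. Because AI answers vary from run to run, larger samples give more stable numbers.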

If you are already thinking about partner ROI and program leverage, this is a strong reminder that modern partnership value includes influence in places your standard attribution models may not yet fully capture.

“If your brand isn’t showing up within the LLMs, there’s a good chance that you’re not even making it on the shortlist.” -Tyler Calder

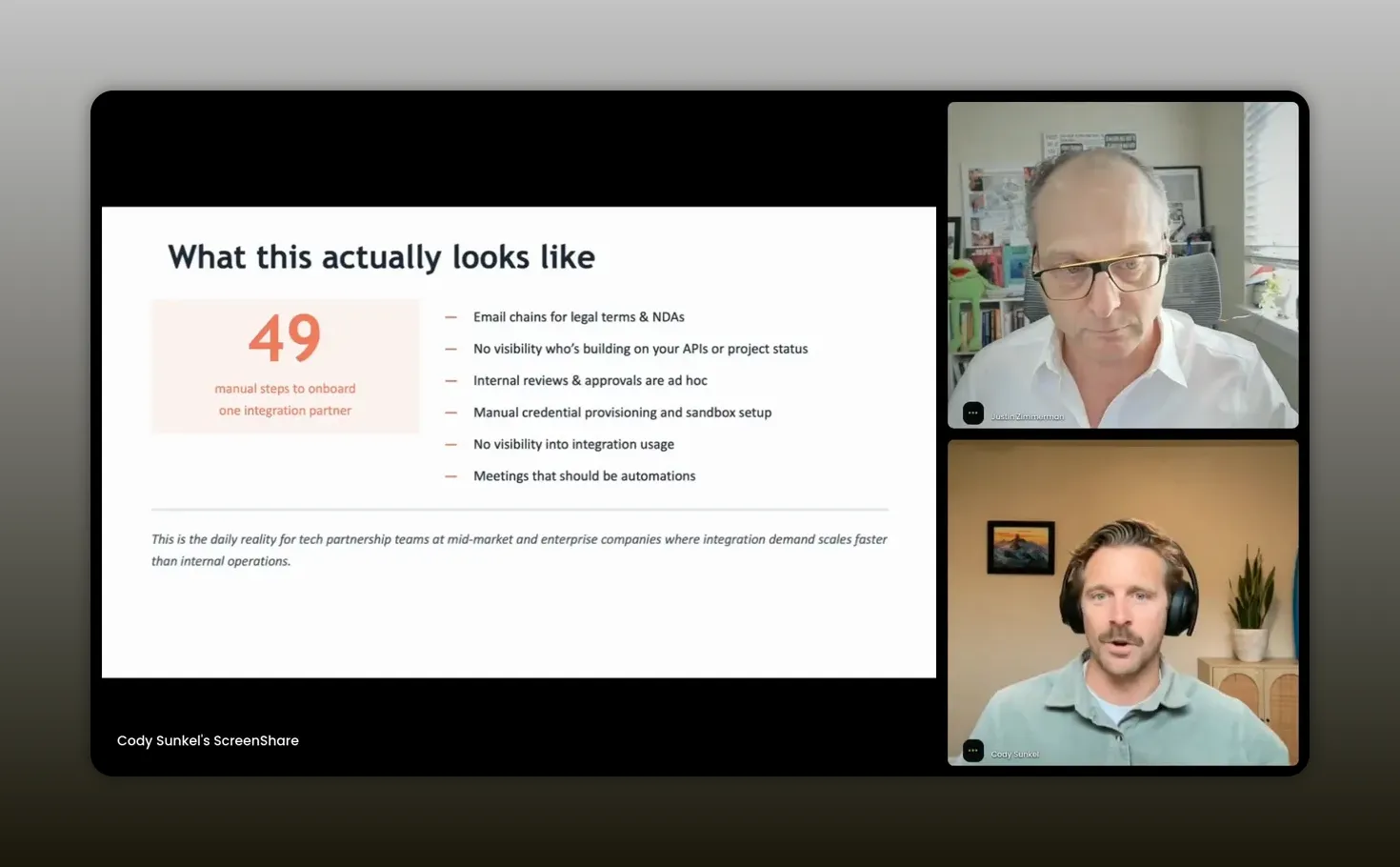

Why tech partnerships need a different type of platform

Cody from Partner Fleet picked up on an important distinction: not every partner team has the same operational needs.

If you are running tech partnerships, ISV motions, API-based ecosystems, or app marketplaces, traditional PRM categories often miss the mark.

Most partner platforms were built around:

- referral workflows

- reseller motions

- deal registration

- channel tiers

- co-sell enablement

- MDF and incentive management

Those may be useful, but they do not solve the specific workflow complexity of integration partnerships.

Cody outlined the gap clearly. Tech partnership teams often need support for:

- developer onboarding

- integration lifecycle management

- API credentialing and sandbox access

- app review and technical QA

- public partner marketplaces

- in-app marketplaces

- usage analytics and integration ROI

When those workflows are not supported by purpose-built tooling, the result is operational sprawl. One example he shared required 49 manual steps to onboard a single integration partner.

That is the kind of friction AI does not magically fix. If anything, AI can make the upstream problem worse: it lowers the barrier to building integrations, so more builders want access to your ecosystem while your internal approval flow remains painfully manual.

“We don’t think you’re understaffed. We think you’re under-architected.” -Cody Sunkel

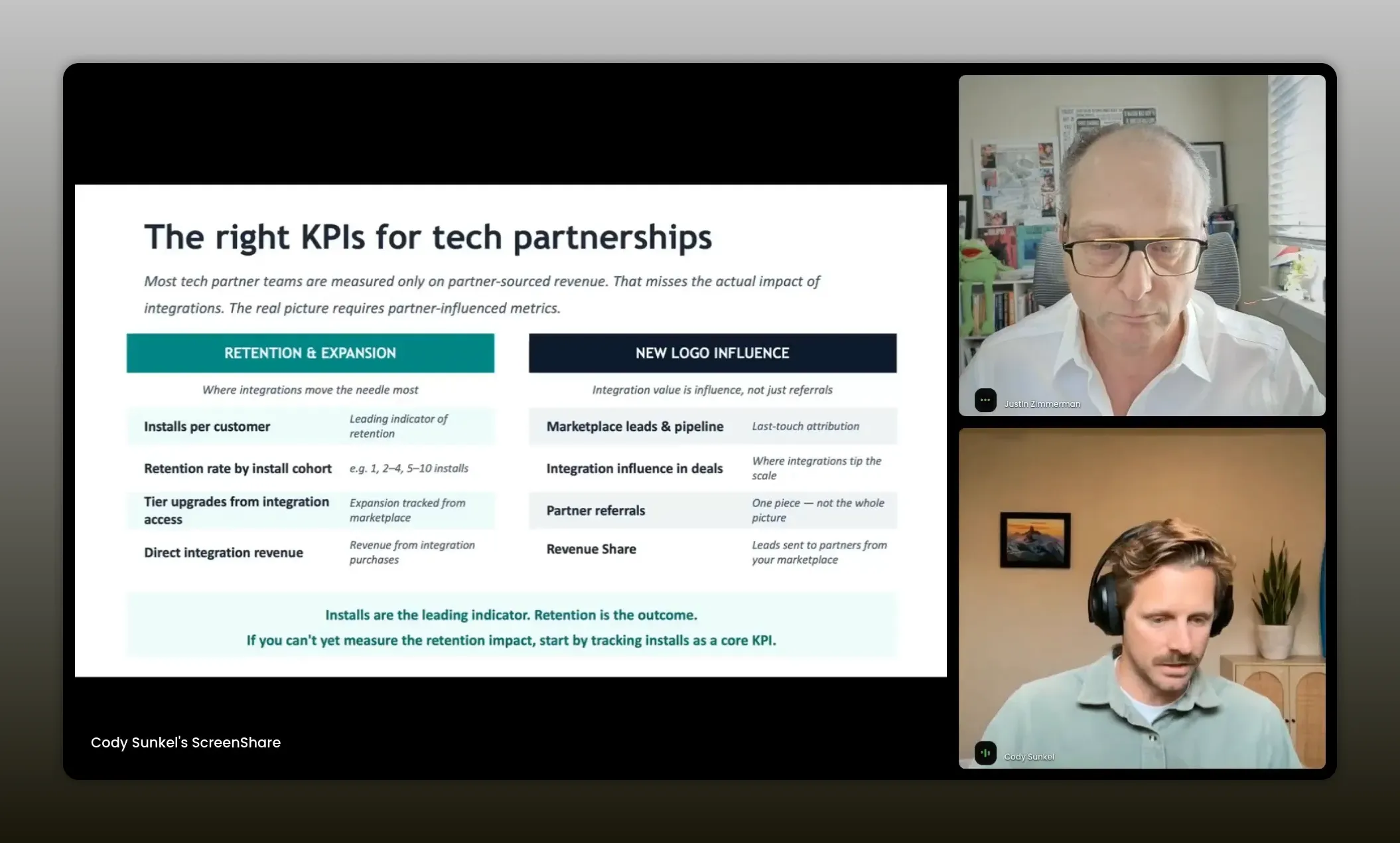

The KPIs tech partner teams should care about more

One of Cody’s strongest points was about measurement.

Too many tech partner teams are measured almost entirely on partner-sourced revenue. That can be part of the story, but it is usually not the whole story. For integrations, the real value often shows up through partner influence.

He recommends a broader KPI model across both retention and new logo impact.

Retention-side metrics

- integration installs per customer

- adoption by cohort

- tier upgrades tied to integration access

- direct revenue from paid integrations

New-logo metrics

- marketplace lead attribution

- integration influence in closed deals

- partner referrals

- revenue share generated through marketplace demand

This broader view is much more useful when you are trying to justify budget. If a customer with one integration retains at a meaningfully higher rate than a customer with none, that is not a side metric. That is business value.

Cody shared examples where customers saw major retention lifts as integration adoption increased, and where public marketplaces were generating large volumes of partner leads each year.
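To produce that kind of retention comparison from your own data, group customers by how many integrations they have installed and compare retention rates across the groups. This is a minimal sketch. It assumes you can export, for each customer, an integration count and whether they were retained over a fixed window. The bucket labels and function names are hypothetical. Keep in mind that it shows correlation, not causation: customers who adopt integrations may differ in other ways too.

```python
from dataclasses import dataclass


@dataclass
class Customer:
    customer_id: str
    integrations_installed: int
    retained: bool  # still a customer at the end of the measurement window


def bucket(count: int) -> str:
    """Hypothetical grouping: no integrations, exactly one, or two or more."""
    if count == 0:
        return "0"
    return "1" if count == 1 else "2+"


def retention_by_integration_bucket(customers: list[Customer]) -> dict[str, float]:
    """Retention rate for each integration bucket."""
    groups: dict[str, list[bool]] = {}
    for c in customers:
        groups.setdefault(bucket(c.integrations_installed), []).append(c.retained)
    # True counts as 1 and False as 0, so sum() gives the number retained.
    return {key: sum(flags) / len(flags) for key, flags in groups.items()}


if __name__ == "__main__":
    sample = [
        Customer("a", 0, True), Customer("b", 0, False),
        Customer("c", 1, True), Customer("d", 3, True),
    ]
    print(retention_by_integration_bucket(sample))
```

For a budget conversation, the gap between the "0" bucket and the others is the number worth bringing, along with the sample size behind each bucket.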

That lines up with a wider theme in ecosystem programs: if you only measure last-touch sourced revenue, you will miss much of what partnerships actually do. There is a helpful companion read on partnership KPIs and project discipline if you want to go deeper on that point.

“If you’re only tracking partner-sourced revenue, you’re missing the real KPI story for integrations.” -Cody Sunkel

Recommended tools

The right stack depends on your partner motion, your team maturity, and what problem you are actually trying to solve. Based on the approaches discussed, here are the tool categories worth evaluating.

For partner program readiness and implementation planning

- Consulting and implementation support for requirements gathering, vendor selection, rollout planning, and change management

- Security and procurement workflows to speed vendor onboarding internally

For referral, affiliate, and co-sell motions

- PRM platforms with frictionless lead capture, partner recruitment, and attribution

- Messaging workflow integrations such as Slack and email capture

- Partner content and recruitment networks when scale matters

For AI visibility and partner-created demand

- AI visibility platforms to understand which third-party domains influence your category in LLMs

- Content marketplace capabilities to activate partner-created content where it matters

- Search and visibility tools like Semrush for broader discovery and competitive context

For tech partnerships and integration ecosystems

- Developer lifecycle platforms for onboarding and approvals

- Public marketplaces for demand capture and attribution

- In-app marketplaces for customer adoption and integration installs

- Product analytics and CRM reporting to connect integration usage to retention and expansion

The important point is not to buy every category. It is to match the platform to the operational bottleneck you are actually trying to remove.

FAQs

How do you know if your partner team is ready for AI tools?

Start by assessing readiness across people, program, and process. If you do not have clear ownership, a defined partner motion, clean data, and repeatable workflows, AI will likely amplify confusion instead of fixing it.

What is the biggest reason partner tech implementations fail?

Internal misalignment is usually the biggest blocker. If IT, operations, security, marketing, or enablement are not aligned early, the implementation can stall before it starts or struggle after launch.

Should you buy a new tool if your current platform already has a roadmap?

Not immediately. First evaluate whether your current vendor can solve enough of the problem through existing or near-term capabilities. Extending a current platform can be easier to approve, cheaper to implement, and less risky than adding another system.

What does “if it’s not in the demo, it doesn’t exist” actually mean?

It means you should validate your exact workflow in a concrete way before buying. Do not assume a vendor’s language maps perfectly to your needs. Ask specific questions, request proof, and make sure the process you care about is truly supported.

Why are partner-created content and AI visibility connected?

Large language models often rely on third-party sources when shaping brand visibility in a category. That means partner-created articles, reviews, and niche publisher content can influence whether your brand shows up, how often it appears, and how accurately it is described.

How should tech partnership teams measure ROI?

Do not stop at partner-sourced revenue. Look at integration installs, retention lift, expansion, marketplace leads, influenced deals, and the effect of adoption by customer cohort. For many integration programs, influence metrics tell the real story.

What is the best way to ask leadership for budget for partner tech?

Bring a de-risked case for change. Show readiness, define the exact problem, map the ask to company priorities, include realistic timelines, spell out resourcing needs, and build a financial model that shows the cost of inaction.

Conclusion

The most useful takeaway here is that partner tech decisions are not software decisions first. They are operating model decisions first.

If you get clear on readiness, align the right internal teams, define success tightly, and phase your investment realistically, you dramatically improve your odds of buying the right thing and getting it adopted. Then AI can do what it should: reduce friction, improve execution, and help your team scale what already works.

Whether you are evaluating a PRM, trying to improve lead capture, building an integration marketplace, or figuring out how partner-created content affects AI visibility, the same rule applies. Clarity before capability. Readiness before automation. Alignment before implementation.

That is how you avoid creating a bigger mess with better software.